Digital Twins: Helping Your Home and Bamboo Reach Their Full Potential

From smart homes to Smart Cities, discover the hidden potential of Digital Twin technology. Welcome to an exciting journey into the world

Platform Engineer at ARQ Group

The pace of change and the accelerated arrival of Industry 4.0 have forced many areas of business, and society in general, to adjust quickly to this new digital way of working. COVID takes some responsibility, but the movement was already underway.

‘Digital Twins’ is a buzzword now used across all industries. This brief introduction to how we see the technology being used, now and into the future, explains what it really means and how it can add tangible business value for you.

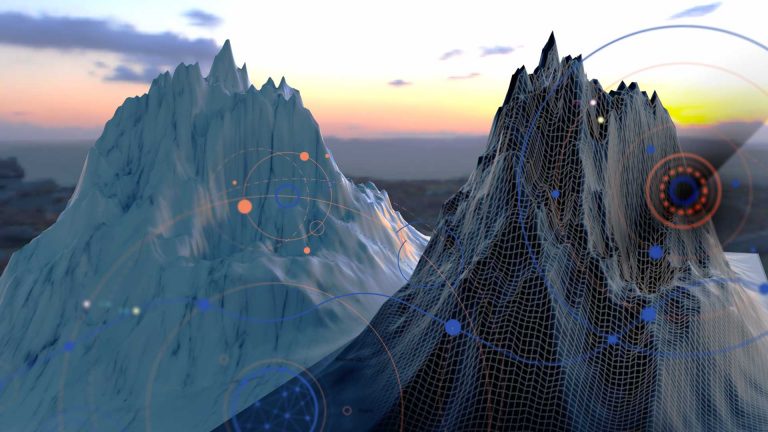

The term ‘Digital Twin’ was most notably used by NASA in the early 2000s to describe “a virtual representation in virtual space of a physical structure in real space and the information flow between the two that keeps the former synchronized with the latter”. The idea was applied when designing future rockets, to design and model how various systems would best work with each other before so much as a screwdriver was picked up.

The simplest explanation of a Digital Twin is that it gives you a dashboard-level view of your plant, process or operation wherever you are, as a digital overlay of those components. More than just remote camera or sensor monitoring, a Digital Twin becomes an engaged agent: it follows rules you set to look out for issues before they happen, continually learns from and advises on your whole connected ecosystem, and can (potentially) actively intervene to prevent dangerous or costly issues from occurring. Further, that intelligence can be used to model ‘what-if’ scenarios to help build better, more economically viable, safer and environmentally cleaner processes.
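To make the idea of rules and ‘what-if’ scenarios more concrete, here is a minimal sketch in Python. It is an illustration only: the names `PumpTwin` and `Rule`, the sensor fields and the thresholds are all hypothetical assumptions, not taken from any product, standard or ARQ Group implementation.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# All names and thresholds below are illustrative assumptions.


@dataclass
class Rule:
    name: str
    check: Callable[[Dict[str, float]], bool]  # returns True when the rule fires


@dataclass
class PumpTwin:
    """Virtual copy of one physical pump, kept in sync by telemetry updates."""
    state: Dict[str, float] = field(default_factory=dict)
    rules: List[Rule] = field(default_factory=list)

    def ingest(self, reading: Dict[str, float]) -> List[str]:
        """Synchronise the twin with a new reading and return any alerts."""
        self.state.update(reading)
        return self.evaluate(self.state)

    def evaluate(self, state: Dict[str, float]) -> List[str]:
        """Return the names of all rules that fire for the given state."""
        return [rule.name for rule in self.rules if rule.check(state)]

    def what_if(self, changes: Dict[str, float]) -> List[str]:
        """Evaluate the rules on a hypothetical state; the real state is untouched."""
        return self.evaluate({**self.state, **changes})


twin = PumpTwin(rules=[
    Rule("overheating", lambda s: s.get("temp_c", 0.0) > 80.0),
    Rule("bearing wear", lambda s: s.get("vibration_mm_s", 0.0) > 7.0),
])

# A real reading arrives from the (assumed) sensors: no rule fires.
alerts_now = twin.ingest({"temp_c": 72.0, "vibration_mm_s": 3.1})

# What if the pump ran 13 degrees hotter? The twin answers without touching the pump.
alerts_hypothetical = twin.what_if({"temp_c": 85.0})
```

In this sketch the twin only raises alerts. A real implementation would sit on top of streamed IoT telemetry and could go further, for example by learning thresholds from historical data or by triggering an intervention.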

While still evolving, more recent implementations range from the very small scale of cellular analysis by biochemists right through to city- and country-wide scales, where everything from water and power utilities to man-made structures and naturally occurring features is being modelled and tracked with IoT sensors. The most typical implementations are becoming manufacturing process monitoring and facilities management. This type of ‘smart’ integration was the first step towards a Digital Twin. Combined with the often significant amount of historical and streamed data now so prevalent in our modern world, the Digital Twin is the collection and aggregation point where we can begin to make sense of that ocean of data and real-world telemetry at our disposal, and begin to make it work for us.

Data on its own is, however, ultimately useless if it is not used, and the true value of Digital Twins lies in the art of knowing what is relevant. It is about more than having every sensor out there; it is about knowing which ones provide useful insights or give you that critical early warning.

There is a catch: attempting to build a large number of complex processes and capture points can take a very long time. While keeping that end state in mind, the first step should be to build a thin slice that has a discrete, demonstrable positive impact, shows value, and integrates into existing work practices and environments without significant disruption. Limiting the scope in this way helps to provide a relevant template for your business or industry. As more examples are realised, the Digital Twin concept moves from being heavily bespoke and specific to each implementation to being a question of re-usable patterns. This has the effect of commoditising the approach, making it more common while democratising the intelligence and deep knowledge that continue to evolve as your business does.

In most cases, this initial implementation is a 3-6 month window in which an outcome can be proven, at a cost of around $50k. Once this value is clear, the options for further enhancement, and how to prioritise them, become clearer, and you can take advantage of any new advancements in what is a highly dynamic and changeable digital space.

James Litjens is the Director of Emerging Technologies at ARQ Group. When James isn't leveraging tech for clients or delving into what's hot, he's building his own mobile apps, competing in triathlons and playing the drums in his apartment (at 1 am). Ever-so-considerate, James wears headphones when playing his electric drums. James' real drum kit is stored in a secret location with no neighbours. You can reach James at: james.litjens@arq.group
